import numpy as np

import plotly.graph_objects as go

# -----------------------------

# PARTE 0: CONFIGURACIÓN INICIAL

# -----------------------------

np.random.seed(2026)

tipo_cambio = 18.5

dt = 1 / 365 # Paso diario

# -----------------------------

# PARTE 1: CALIBRACIÓN DE PARÁMETROS

# -----------------------------

N_polizas = 6800

prima_base_por_poliza = 850.00

delta = 0.0015

X0_hist = 500e6 # Capital inicial histórico

U0_forecast = 520e6 # Capital inicial para pronóstico (ejemplo)

# Inflación y primas

inflacion_mensual = np.array([

0.52, 0.38, 0.38, 0.41, 0.45, 0.49, 0.55, 0.61, 0.58, 0.47, 0.42, 0.39,

0.35, 0.50, 0.37, 0.40, 0.44, 0.48, 0.53, 0.59, 0.56, 0.46, 0.41, 0.38

]) / 100.0

g = inflacion_mensual + delta

cum_g = np.cumsum(g)

primas_por_poliza_mxn = prima_base_por_poliza * np.exp(cum_g)

primas_totales_mxn = primas_por_poliza_mxn * N_polizas

c_t_mensual_usd = primas_totales_mxn / tipo_cambio

c_t_mensual_usd = np.round(c_t_mensual_usd, 2)

# --- Intensidades calibradas ---

lambda_meteo_annual = 6.0

lambda_sismo = 0.15 #0.08

seasonal_raw = [0.3, 0.3, 0.4, 0.6, 1.0, 1.8, 2.2, 2.5, 2.8, 2.3, 1.2, 0.5]

seasonal_norm = np.array(seasonal_raw) / np.mean(seasonal_raw)

# --- Parámetros de severidad (ahora dependientes del estado) ---

ceiling_per_policy = 5000.0

COEF_VARIACION_METEO = 0.1

COEF_VARIACION_SISMO = 0.2

# --- Rendimiento y volatilidad ---

r_t_mensual = np.array([

0.606, 0.602, 0.600, 0.597, 0.593, 0.589,

0.586, 0.582, 0.578, 0.574, 0.570, 0.566,

0.562, 0.559, 0.555, 0.551, 0.547, 0.543,

0.539, 0.535, 0.532, 0.528, 0.524, 0.520

]) / 100.0

sigma_t_mensual = np.array([

0.44, 0.47, 0.50, 0.53, 0.59, 0.74,

0.89, 1.34, 1.19, 0.98, 0.74, 0.68,

0.65, 0.60, 0.58, 0.60, 0.65, 0.74,

0.89, 0.82, 0.95, 0.86, 0.74, 0.68

]) / 100.0

# -----------------------------

# PARTE 2: FUNCIÓN AUXILIAR PARA LA COMPENSACIÓN

# -----------------------------

def expected_loss_per_event(deviation, pct_low, pct_high, n_pols):

"""

Calcula la pérdida esperada por un evento dado el estado actual.

"""

E_pct = (pct_low + pct_high) / 2

n_aff_mean = n_pols * E_pct #Valor esperado de la distribución uniforme para el número de afectados

# mu_per_policy = deviation <-- This line is now redundant as deviation is used directly

return n_aff_mean * deviation

# -----------------------------

# PARTE 3: SIMULACIÓN HISTÓRICA (Ene 2024 - Dic 2025) CON COMPENSACIÓN

# -----------------------------

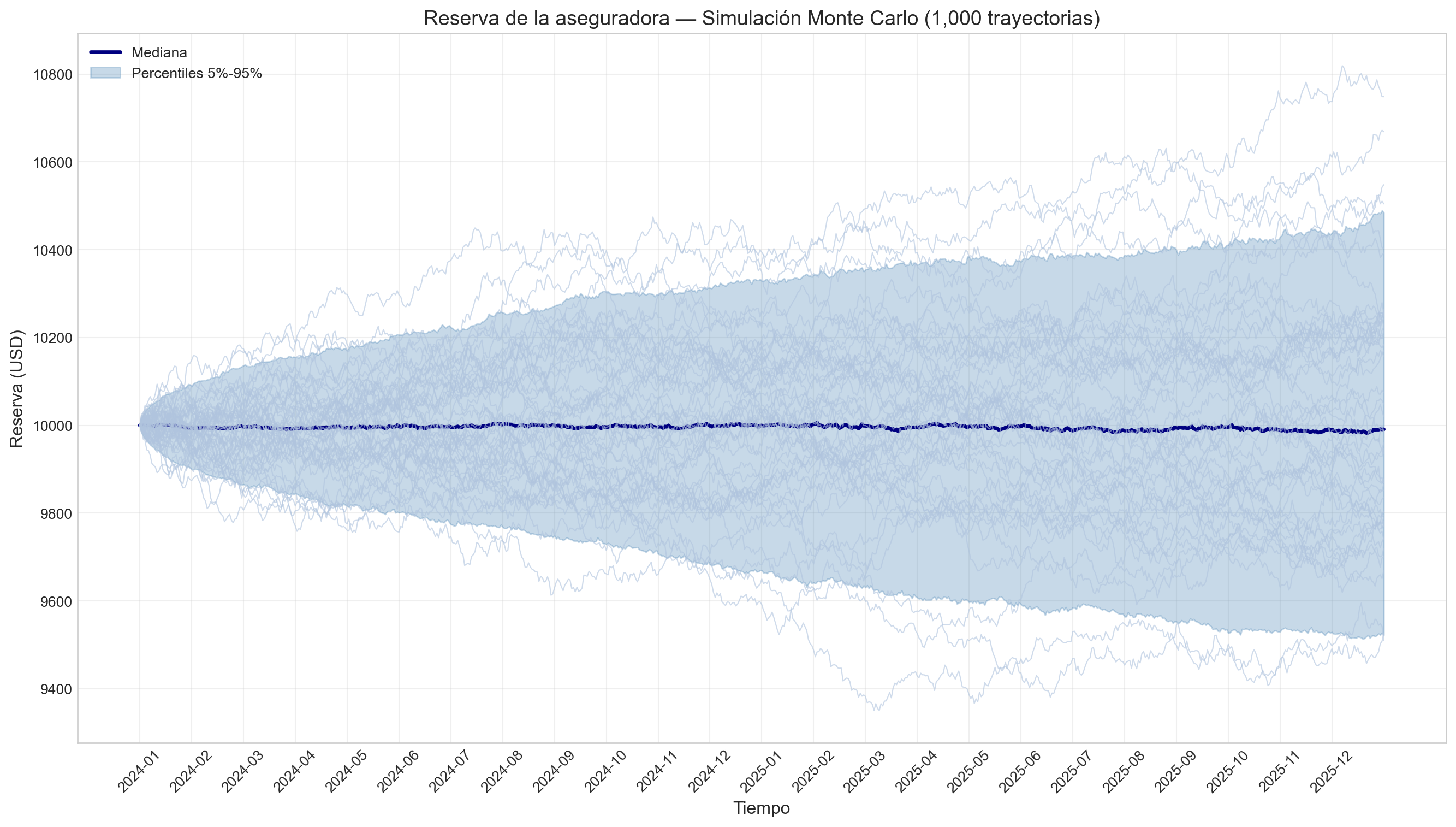

T_hist = 2.0

N_hist = int(T_hist / dt)

N_sim_hist = 1000

dias_hist = np.linspace(0, T_hist, N_hist + 1)

meses_enteros = np.arange(24)

r_hist = np.interp(dias_hist[:-1], meses_enteros, r_t_mensual)

sigma_hist = np.interp(dias_hist[:-1], meses_enteros, sigma_t_mensual)

c_hist = np.interp(dias_hist[:-1], meses_enteros, c_t_mensual_usd)

X_hist = np.zeros((N_sim_hist, N_hist + 1))

X_hist[:, 0] = X0_hist

print("Simulando periodo histórico (Ene 2024 - Dic 2025) con Milstein y compensación...")

for sim in range(N_sim_hist):

for i in range(N_hist):

t_dia = dias_hist[i]

mes_actual = int(t_dia) % 12

lam_meteo_dia = (lambda_meteo_annual / 365) * seasonal_norm[mes_actual]

lam_sismo_dia = lambda_sismo / 365

n_meteo = np.random.poisson(lam_meteo_dia)

n_sismo = np.random.poisson(lam_sismo_dia)

x_current = X_hist[sim, i]

deviation = (abs(x_current - X0_hist))/N_polizas

if deviation > ceiling_per_policy:

deviation = ceiling_per_policy

total_jump = 0.0

compensacion = 0.0

# --- Eventos Meteorológicos ---

if n_meteo > 0:

pct_affected = np.random.uniform(0.005, 0.02)

n_affected = max(1, int(N_polizas * pct_affected))

mu_per_policy = deviation

sigma_per_policy = COEF_VARIACION_METEO * mu_per_policy

losses = np.random.normal(mu_per_policy, sigma_per_policy, size=n_affected)

losses = np.clip(losses, 0.0, None)

total_jump += np.sum(losses)

# Compensación meteorológica

E_loss_meteo = expected_loss_per_event(

deviation, 0.005, 0.02, N_polizas

)

compensacion += (-1)*lam_meteo_dia * E_loss_meteo

# --- Eventos Sísmicos ---

if n_sismo > 0:

pct_affected = np.random.uniform(0.01, 0.10)

n_affected = max(1, int(N_polizas * pct_affected))

mu_per_policy = deviation

sigma_per_policy = COEF_VARIACION_SISMO * mu_per_policy

losses = np.random.normal(mu_per_policy, sigma_per_policy, size=n_affected)

losses = np.clip(losses, 0.0, None)

total_jump += np.sum(losses)

# Compensación sísmica

E_loss_sismo = expected_loss_per_event(

deviation, 0.01, 0.10, N_polizas

)

compensacion += (-1)*lam_sismo_dia * E_loss_sismo

# --- Actualización con Milstein + Compensación ---

dW = np.sqrt(dt) * np.random.randn()

drift_base = c_hist[i] * dt + r_hist[i] * x_current * dt

drift_compensado = drift_base + compensacion

diffusion = sigma_hist[i] * x_current * dW

milstein_corr = 0.5 * (sigma_hist[i]**2) * x_current * (dW**2 - dt)

X_hist[sim, i+1] = x_current + drift_compensado + diffusion + milstein_corr - total_jump

U0_forecast = np.median(X_hist[:, -1])

print(f"Condición inicial para pronóstico (Ene 2026): ${U0_forecast/1e6:.2f}M")

# -----------------------------

# PARTE 4: SIMULACIÓN DEL PRONÓSTICO (Ene 2026) CON COMPENSACIÓN

# -----------------------------

forecast_days = 30

mes_pronostico = 0

# Promedios para enero (mes 0 y mes 12)

c_ene_avg = (c_t_mensual_usd[0] + c_t_mensual_usd[12]) / 2

r_ene_avg = (r_t_mensual[0] + r_t_mensual[12]) / 2

sigma_ene_avg = (sigma_t_mensual[0] + sigma_t_mensual[12]) / 2

sf_ene_avg = seasonal_norm[0] # Enero es el mes 0

c_daily_fc = np.full(forecast_days, c_ene_avg / 30.0)

r_daily_fc = np.full(forecast_days, r_ene_avg)

sigma_daily_fc = np.full(forecast_days, sigma_ene_avg)

lambda_meteo_dia_fc = (lambda_meteo_annual / 365) * sf_ene_avg

lambda_sismo_dia_fc = lambda_sismo / 365

np.random.seed(2027)

N_sim_fc = 1000

U_forecast = np.zeros((N_sim_fc, forecast_days + 1))

U_forecast[:, 0] = U0_forecast

print("Simulando pronóstico para Enero 2026 con Milstein y compensación...")

for sim in range(N_sim_fc):

for i in range(forecast_days):

u_current = U_forecast[sim, i]

deviation = (abs(u_current - U0_forecast))/N_polizas

if deviation > ceiling_per_policy:

deviation = ceiling_per_policy

total_jump = 0.0

compensacion = 0.0

# --- Simular eventos ---

n_meteo = np.random.poisson(lambda_meteo_dia_fc)

n_sismo = np.random.poisson(lambda_sismo_dia_fc)

# --- Eventos Meteorológicos ---

if n_meteo > 0:

pct_affected = np.random.uniform(0.005, 0.02)

n_affected = max(1, int(N_polizas * pct_affected))

mu_per_policy = deviation

sigma_per_policy = COEF_VARIACION_METEO * mu_per_policy

losses = np.random.normal(mu_per_policy, sigma_per_policy, size=n_affected)

losses = np.clip(losses, 0.0, None)

total_jump += np.sum(losses)

# Compensación meteorológica

E_loss_meteo = expected_loss_per_event(

deviation, 0.005, 0.02, N_polizas

)

compensacion += (-1)*lambda_meteo_dia_fc * E_loss_meteo

# --- Eventos Sísmicos ---

if n_sismo > 0:

pct_affected = np.random.uniform(0.01, 0.10)

n_affected = max(1, int(N_polizas * pct_affected))

mu_per_policy = deviation

sigma_per_policy = COEF_VARIACION_SISMO * mu_per_policy

losses = np.random.normal(mu_per_policy, sigma_per_policy, size=n_affected)

losses = np.clip(losses, 0.0, None)

total_jump += np.sum(losses)

# Compensación sísmica

E_loss_sismo = expected_loss_per_event(

deviation, 0.01, 0.10, N_polizas

)

compensacion +=(-1)* lambda_sismo_dia_fc * E_loss_sismo

# --- Actualización con Milstein + Compensación ---

dW = np.sqrt(dt) * np.random.randn()

drift_base = c_daily_fc[i] * dt + r_daily_fc[i] * u_current * dt

drift_compensado = drift_base + compensacion

diffusion = sigma_daily_fc[i] * u_current * dW

milstein_corr = 0.5 * (sigma_daily_fc[i]**2) * u_current * (dW**2 - dt)

U_forecast[sim, i+1] = u_current + drift_compensado + diffusion + milstein_corr - total_jump

# -----------------------------

# PARTE 5: CÁLCULO DE ESTADÍSTICAS

# -----------------------------

median_hist = np.median(X_hist, axis=0) / 1e6

p05_hist = np.percentile(X_hist, 5, axis=0) / 1e6

p95_hist = np.percentile(X_hist, 95, axis=0) / 1e6

median_fc = np.median(U_forecast, axis=0) / 1e6

p05_fc = np.percentile(U_forecast, 5, axis=0) / 1e6

p95_fc = np.percentile(U_forecast, 95, axis=0) / 1e6

# -----------------------------

# PARTE 6: DETECCIÓN DE SALTOS EN LA MEDIANA

# -----------------------------

def detect_jumps(median_series):

"""Detecta saltos en una serie temporal usando umbral de 3 desviaciones estándar."""

median_usd = median_series * 1e6

log_returns = np.log(median_usd[1:] / median_usd[:-1])

threshold = 3 * np.std(log_returns)

jump_flags = np.abs(log_returns) > threshold

jump_indices = np.where(jump_flags)[0] + 1

jump_times = np.arange(len(median_series))[jump_indices]

jump_values = median_series[jump_indices]

return jump_times, jump_values

# Detectar saltos

time_historical = np.arange(N_hist + 1)

jump_times_hist, jump_values_hist = detect_jumps(median_hist)

time_forecast = np.arange(N_hist, N_hist + forecast_days + 1)

jump_times_fc, jump_values_fc = detect_jumps(median_fc)

# -----------------------------

# PARTE 7: GRÁFICA INTERACTIVA CON PLOTLY

# -----------------------------

fig = go.Figure()

# Área de incertidumbre histórico

fig.add_trace(go.Scatter(

x=np.concatenate([time_historical, time_historical[::-1]]),

y=np.concatenate([p95_hist, p05_hist[::-1]]),

fill='toself',

fillcolor='rgba(144, 238, 144, 0.3)',

line=dict(color='rgba(144, 238, 144, 0)'),

hoverinfo="skip",

showlegend=True,

name='Histórico (5%-95%)'

))

# Mediana histórica

fig.add_trace(go.Scatter(

x=time_historical,

y=median_hist,

mode='lines',

line=dict(color='darkgreen', width=2.5),

name='Mediana (Histórico)'

))

# Saltos en la mediana histórica

if len(jump_times_hist) > 0:

fig.add_trace(go.Scatter(

x=jump_times_hist,

y=jump_values_hist,

mode='markers',

marker=dict(color='red', size=8, symbol='x'),

name='Saltos (Histórico)'

))

# Área de incertidumbre del pronóstico

fig.add_trace(go.Scatter(

x=np.concatenate([time_forecast, time_forecast[::-1]]),

y=np.concatenate([p95_fc, p05_fc[::-1]]),

fill='toself',

fillcolor='rgba(205, 92, 92, 0.25)',

line=dict(color='rgba(205, 92, 92, 0)'),

hoverinfo="skip",

showlegend=True,

name='Pronóstico (5%-95%)'

))

# Mediana del pronóstico

fig.add_trace(go.Scatter(

x=time_forecast,

y=median_fc,

mode='lines',

line=dict(color='darkred', width=2.5),

name='Mediana (Pronóstico)'

))

# Saltos en la mediana del pronóstico

if len(jump_times_fc) > 0:

fig.add_trace(go.Scatter(

x=jump_times_fc + N_hist,

y=jump_values_fc,

mode='markers',

marker=dict(color='blue', size=10, symbol='star'),

name='Saltos (Pronóstico)'

))

# Línea divisoria

fig.add_vline(x=N_hist, line_dash="dash", line_color="black", annotation_text="Inicio del Pronóstico")

# Configuración final

months_full = [f"{y}-{m:02d}" for y in [2024, 2025] for m in range(1, 13)]

month_labels = months_full[::2]

month_ticks = np.arange(0, 720, 60)

all_ticks = np.concatenate([month_ticks, [N_hist + 30]])

all_labels = month_labels + ["2026-01"]

# Configuración final optimizada

# Configuración final optimizada

fig.update_layout(

title=dict(

text='<b>Reservas de la Aseguradora: Histórico (2024–2025) + Pronóstico Ene 2026</b>',

subtitle=dict( # ✅ CORRECCIÓN: subtitle debe ser un diccionario

text='<i>Modelo Cramér-Lundberg extedido con Milstein, Siniestros Realistas y Detección de Saltos (México)</i>'

),

x=0.5,

xanchor='center',

y=0.95,

pad=dict(t=10, b=10)

),

xaxis_title="<b>Tiempo</b>",

yaxis_title="<b>Reservas (Millones USD)</b>",

xaxis=dict(

tickmode='array',

tickvals=all_ticks,

ticktext=all_labels,

tickangle=45,

showgrid=True,

gridcolor='lightgray'

),

yaxis=dict(

showgrid=True,

gridcolor='lightgray',

zeroline=True,

zerolinecolor='black'

),

legend=dict(

orientation="h",

yanchor="bottom",

y=1.12,

xanchor="right",

x=0.99,

font=dict(size=9),

bgcolor='rgba(255,255,255,0.8)'

),

hovermode="x unified",

template="plotly_white",

height=500,

width=800,

margin=dict(

l=80,

r=70,

t=150,

b=100

),

plot_bgcolor='rgba(245,245,245,0.5)'

)

fig.show()

# -----------------------------

# PARTE 8: RESUMEN DEL PRONÓSTICO

# -----------------------------

print(f"\nPronóstico para Enero 2026:")

print(f"- Reserva inicial: ${U0_forecast/1e6:.2f}M")

print(f"- Mediana final: ${median_fc[-1]:.2f}M")

print(f"- IC (5%-95%): [${p05_fc[-1]:.2f}M, ${p95_fc[-1]:.2f}M]")